Results

Figure 3.

Visual comparison of group activity retrieval on the Volleyball dataset (VBD).

For an R-set query (top), our method correctly retrieves an R-set video by capturing

player motion direction, whereas GAFL retrieves an R-spike video.

For an R-spike query (bottom), our method identifies the jumping spiker and blockers

via local dynamics features, while GAFL relies on static appearance similarity.

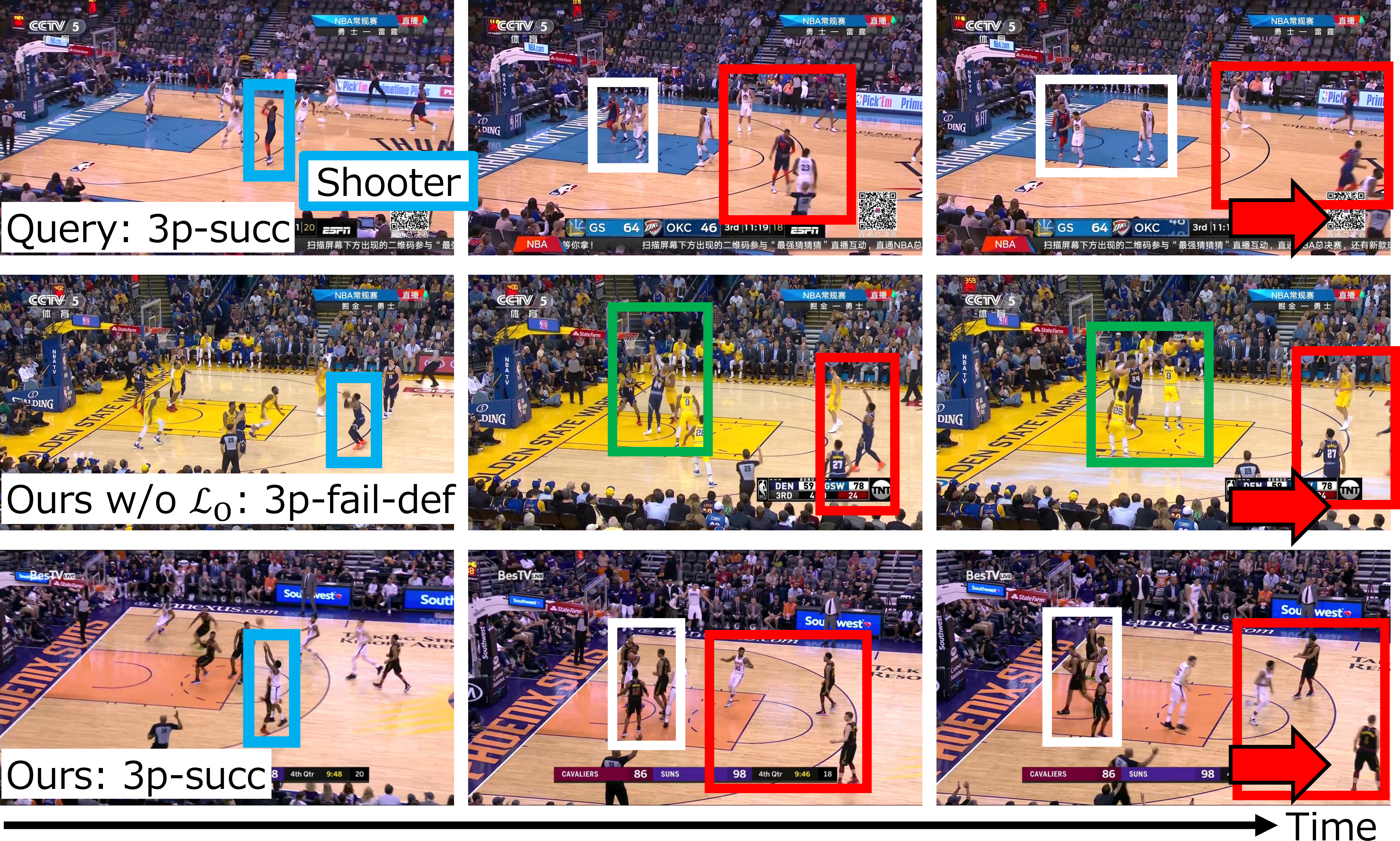

Figure 4.

Visual comparison of group activity retrieval on the NBA dataset.

For a 3p-succ query, our method correctly retrieves a 3p-succ video by capturing

global player interactions after the shot, whereas the method without LO

retrieves a 3p-fail-def video.

Quantitative Results — Group Activity Retrieval (Hit@K, %)

| Method |

VBD |

NBA |

| Hit@1 |

Hit@3 |

Hit@1 |

Hit@3 |

| B1-Compact [ECCV 2018] |

30.3 | 59.9 | 14.9 | 39.5 |

| B2-VGG19 [ECCV 2018] |

35.4 | 65.0 | 16.8 | 39.8 |

| HRN [ECCV 2018] |

31.2 | 57.6 | 15.5 | 37.1 |

| GAFL [CVPR 2024] |

61.1 | 82.4 | 24.7 | 50.4 |

| ★ Ours |

82.7 |

93.0 |

43.9 |

72.0 |

Table 1.

Comparison with state-of-the-art self-supervised GAF learning methods on VBD and NBA.

Our method achieves the best performance on all metrics.

Results in red indicate the best score per column.

Quantitative Results — Group Activity Recognition (MCA, %)

| Method |

Extractor |

VBD |

NBA |

| DFWSGAR [CVPR 2022] |

ResNet-18 | 90.5 | 75.8 |

| SOGAR [IEEE Access 2025] |

ViT-Base | 93.1 | 83.3 |

| Flaming-Net [ECCV 2024] |

Inception-v3 | 93.3 | 79.1 |

| LiGAR [WACV 2025] |

ResNet-18 | 74.8 | 62.7 |

| SAM [ECCV 2020] |

ResNet-18 | 86.3 | 54.3 |

| Dual-AI [CVPR 2022] |

Inception-v3 | — | 58.1 |

| KRGFormer [TCSVT 2023] |

Inception-v3 | 92.4 | 72.4 |

| MP-GCN [ECCV 2024] |

YOLOV8x | 92.8 | 78.7 |

| FAGAR [Pattern Recognit. 2025] |

YOLOV8x | 85.2 | — |

| ★ Ours |

DINOv3 |

93.9 |

73.0 |

Table 2.

Comparison with supervised group activity recognition methods on VBD and NBA (MCA %).

Our method achieves the best performance on VBD and competitive performance on NBA.

For a fair comparison, only group activity class labels are used as manual annotations,

and only images are used in inference across all methods.